1. Introduction

The Internet has become increasingly social due to the rise of Web 2.0. There are many different social features implemented in websites, (mobile) applications, and on Internet platforms. These features enable us to continuously communicate with – but also keep track of – our friends, distant relatives, or total strangers. However, the productivity changes remain unclear (Dabbish et al. 2012; Langlois 2011, 2009).

GitHub’s mission to make coding social should be understood as an effort to make collaboration technical. As a digital platform, GitHub1 is engineering and manipulating the way we connect (cf. van Dijck 2013); collaboration, reputation, innovation, sharing, community, and so on, become coded concepts defined by the owners of the platform. Software developers use GitHub to collaboratively organise the development of projects that span over temporal and spatial distance. With over three million users,2 GitHub has grown into the largest web-based code-hosting platform. It is important that we should think about how we can understand the platforms we build, for they become constitutive of the way we think, interact, and relate to each other. GitHub determines not only how and what we learn, but also who we learn with, our understanding of innovation, or the way we coordinate our efforts towards a wide variety of shared goals.

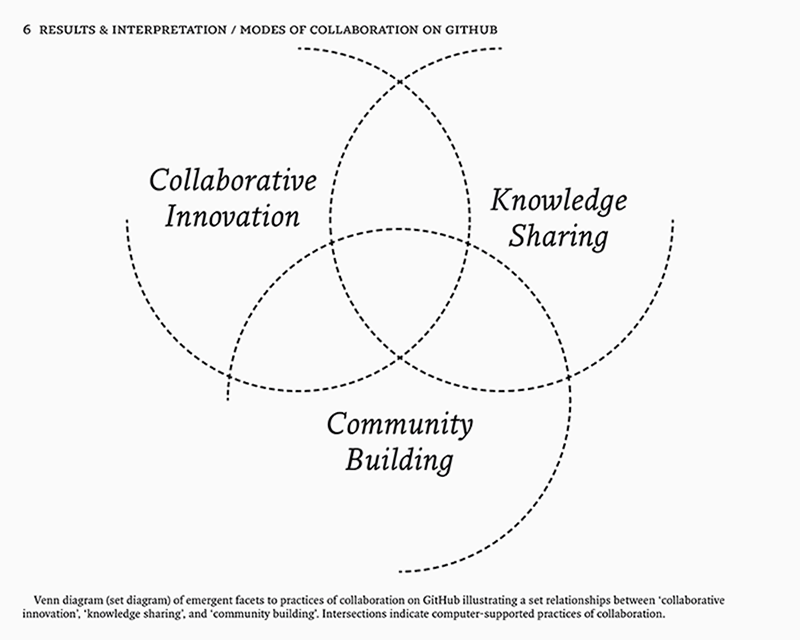

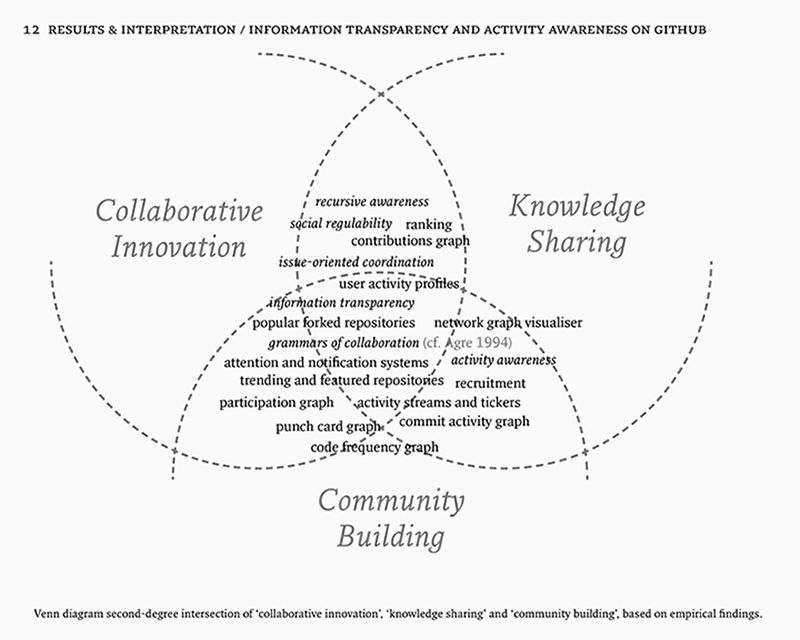

The approaches now known as software studies and platform studies aim to describe these software platforms and mechanisms and try to make sense of them (Fuller 2008; Bogost and Montfort 2009). The perspective of platform technicity in particular seems to offer a fertile ground to look into the many ways technical rationalities and infrastructures structure and govern the relations between humans and their technical supports (Bucher 2012; Niederer and van Dijck 2010; Crogan and Kennedy 2009). By addressing the following research question, we may advance our understanding of how specific material arrangements like GitHub can enable, capture and augment computer-supported collaborative innovation, knowledge building and community building: in what way is the technicity of collaboration on GitHub co-constitutive of computer-supported collaborative innovation, knowledge sharing, and community building? This question is approached from three different, but intertwined angles. Git, the main underlying infrastructure, is first approached as a technical foundation or infrastructure supporting further layers of the GitHub platform that are discussed in the subsequent sections. Second, the practice of collaboration on GitHub is considered by looking into the way specific features augment or serve to deal with particular strengths and shortcomings of Git, and what that means for collaboration. Third, I discuss some of the distinctive features on GitHub that produce activity awareness by constituting an information transparent environment.

2. Theoretical Framework

The following is a reflection on the broader conceptual frameworks to which this research contributes. With a main focus on understanding the “technicity” (Crogan and Kennedy 2009)3 of collaboration on GitHub, this research is situated somewhere between the academic disciplines of software studies (Fuller 2008),4 platform studies (Bogost and Montfort 2009),5 and digital methods (Rogers 2012, 2009).6 As such, it is grounded in the philosophical tradition of the digital humanities (Berry 2011, 2012).

As defined by Ian Bogost and Nick Montfort, a platform is: “a computing system of any sort upon which further computing development can be done. It can be implemented entirely in hardware, entirely in software (which runs on any of several hardware platforms), or in some combination of the two” (2009). In their view, a platform often contains other platforms, which is a useful way of thinking about software and platforms from a perspective of technicity analysis. The notion of technicity embodies the key approach of this research to understand the techno-social structure of GitHub. Bucher (2012, 4), and Crogan and Kinsley (2012, 22) both take this concept to be “the inherent, coconstitutive milieu of relations between the human and their technical supports, agents, supplements (never simply their ‘objects’)” (Crogan and Kennedy 2009, 109). Technicity is why software can be productive (i.e. “do work” in the world), why it can make things happen. It marries the technical object to the context of which it is part, which is to say that they become mutually constitutive.7 The question of what collaboration is then becomes how collaboration becomes.8 In many ways, this approach answers to Geoffrey C. Bowker and Susan Leigh Star’s call to start taking infrastructures seriously (1999). Infrastructure is defined as a relational concept, embedded in other structures, social arrangements, and technologies (35).9 Technicity takes this understanding of infrastructures to the domain of software and platform studies by focusing on the constitutive relations between the technical infrastructures and – in a broad sense – specified human actions. With this conceptual and structural frame in mind, I explore the implications on computer-supported collaborative innovation, knowledge sharing, and community building.

These perspectives are all still in their infancy and in addition to their short existence, the research on software and digital platforms also seems disproportionate to the increasingly pervasive role they play in our (everyday) lives. It has become difficult to even imagine a world without software. This is not just a consequence of being so dependent on automated support mechanisms for our regular activities, but more importantly of a lack of understanding of “the stuff of software” (Fuller 2008) in spite of this dependence. As a result, this research draws heavily from a variety of other disciplines such as software engineering, artificial intelligence, organisation studies and other categories of computer and information science. This way we may be able to bridge some of the gaps between technical engineering, development, implementation and maintenance on the one hand, and thorough analytical and critical understanding of their implications on the other.

2.1. Distributed Computer Systems

GitHub was founded in April 200810 and uses Git as system for version control. Git follows the logic of a distributed computer system, so it is necessary to consider this in terms of implications for any additional layers of software and interaction. Previous research and development of distributed computer systems and networks has resulted in broad adoption and implementation (Milenkovic 2003).11 Peer-to-peer networks and file-sharing practices have emerged and caused scholars to rethink these systems and networks not only on the level of technical infrastructure, but also as models of cultural production, cooperation and distribution (Bauwens 2005).12 A recent example of such a discussion centred on version control systems. Centralised version control systems (CVCS) such as Subversion are gradually being replaced by distributed version control systems (DVCS) such as Git, Mercurial, or Bazaar, for they are much more flexible and able to scale in administration, size and spatial distance (Tanenbaum and van Steen 2007).13 This spawned discussion on the pros and cons of centralised versus distributed models of version control, how the actual work process is transformed because of this, and specific issues that follow from migrating between two such systems (de Alwis and Silito 2009).

2.2. Computer-Supported Cooperative Work

Computer-Supported Cooperative Work (CSCW) repeatedly surfaces as the key site of debate for computer-supported group work and its issues especially before the rise of the Web 2.0 era of platforms and peer-production. CSCW started as an interdisciplinary effort to shed light on supporting group activity (Grudin 1994, 19–20).14 Irene Greif and Paul M. Cashman first coined the term in a workshop (1984) on the role that technology could play in human group work (Grudin 1994), and was later defined by Peter H. Carstensen and Kjeld Schmidt to describe “how collaborative activities and their coordination can be supported by means of computer systems” (1999, qtd. in Itoh 2003, 620). Most of the previous research on CSCW that is relevant here stems from computer science in the eighties and nineties (Grudin 1988),15 and now seems to continue in the slightly different context of social computing implementations.

Social computing implementations can deal with some specific issues that could not be resolved before, even though these had been identified at the time (Schmidt 2002). These are issues of coordination such as lack of awareness and workspace transparency that follow from the move from local (i.e. with physical proximity to other members) to networked (i.e. where members are physically scattered) group working environments (Gutwin et al. 1996; Gutwin and Greenberg 2002; Pinelle et al. 2003).16 This has caused a general shift in development style of open source software from the hierarchical “cathedral” model to something that resembles a “bazaar” (Raymond 1998), resulting in different categories of collaboration such as episodic and continuous collaboration (Rigby et al. 2010; section 4.3.) and the emergence of the endless beta stage of development to harness this collective intelligence (O’Reilly 2005). In this “neodemocratic” environment, different values are important that emphasise commitment to issues rather than information secrecy (Dean 2003). As a web-based code-hosting platform for social coding, GitHub has a very particular understanding of publicness. Ownership and influence become concerns of access and permission protocols17 (section 5.1.), causing a reconfiguration of the public and private (section 5.1.).

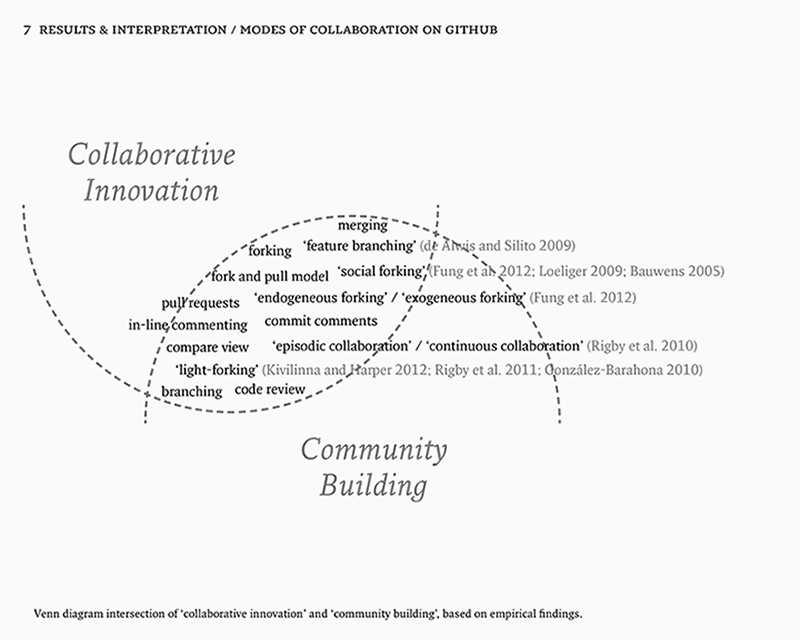

2.3. Social Computing in Open Source Software Development

Social computing has also been explored specifically in open source software development (Şahin et al. 2013; Dabbish et al. 2012; Begel et al. 2010; Fihin 2008). It is not just about notification systems, but also about how specific features actually become social. Social forking (Fung et al. 2012), “light-forks” (González-Barahona 2008; Rigby 2010) and merging make particular interesting cases in that they show how code and community are attached to each other (section 4.3., 5.3). Code is a social object among developers (Dodge and Kitchin 2011), emphasising the importance of “social learning” (Bandura 1977), and “code literacy” (Berry 2011; Hayles 2004) as a required skill to participate both as a developer and as a critic (section 5.2.). The key consequence of this is the implementation of an information-transparant environment that allows for activity awareness to both produce and uphold effective collaborations. The concepts of “capture” (Agre [1994] 2003) and “social inferences” (Dabbish et al. 2012) are useful as frameworks to make sense of this environment (section 6.1., 6.2).

Research on open source software (OSS) development (Raymond 1998)18 has only recently started to embrace the large amounts of data that enables researchers to empirically study software development at large scales and across communities (Mockus 2009). For example, by using data from user profiles, repository metadata from GitHub, Heller et al. (2011) were able to visualise collaboration and influence between developers on a map and identify patterns that would otherwise have remained obscured. This kind of empirical research relies entirely on the quality and size of the public datasets with data on profiles, activity, code, and repositories. The interconnections between the global and local (both geographically and as variables on the platform) need to be considered as well. David Redmiles, for example, explicitly chose to use the term “global software development” to distinguish it from local forms of software development (Redmiles et al. 2007). This should be considered both as a consequence scale-increases (Wilson 1996)19 and of migration across communities such as when code assemblages occur (Fung et al. 2012; section 4.2., 4.3).

3. Research Methodology

In order to address the research question, it needs to be separated into two parts. The first concerns understanding the platform technicity of GitHub, and the second part requires this understanding to make sense of collaboration in terms of innovation, knowledge sharing, and community building. To answer this twofold question, a methodology has been deployed that is similar to those of Bucher (2012), Niederer and van Dijck (2010), and Dabbish et al. (2012). Their research is similar in that Dabbish et al. develop an understanding of how users infer information and meaning from particular functions on GitHub. Bucher, Niederer and van Dijck, on the other hand, used their empirical findings to open up space for a critical perspective on Wikipedia’s standards. It is this particular intention in conducting empirical research that I believe is important.

The first part of the research question is descriptive, and is engaged as an empirical question. The aim is to develop and describe a functional and structural overview of GitHub to understand which of its many features are important to the research question, and how these features relate to each other. Functional description, in this case, means separating the elements and classifying them based on what they do (in the broadest sense). Structural description, on the other hand, focuses on navigational structure and content organisation. The structural becomes relevant when considering features in relation to one another.

To engage with this kind of description, one needs to explore and document features and how these are implemented into the system. The empirical corpus, then, consists of descriptions and discussions on the various implemented systems from blogs, previous research, and observation. In this regard, documents on GitHub are often useful. The GitHub team keeps their developer blogs20 public and up-to-date; GitHub’s help subdomain21 contains extensive description; legal documents such as terms of service,22 privacy and security statements23 are all publicly available; an analytics subdomain24 is dedicated solely to the platform’s status history; and user accounts are publicly accessible, including their detailed data such as activity statistics, project pages, repositories and open source code of the projects they have worked on.25 Description alone, however, will not suffice to really understand software. The second part of the research question therefore aims to extend this empirical foundation in order to make sense of the implications of this particular technicity of collaboration on three connected aspects. These aspects are computer-supported collaborative innovation, knowledge sharing, and community building. This means providing what Clifford Geertz and others26 have called “thick descriptions” (Geertz 1973, 3–30, ch. 1; Murdoch 1997, 91–98, ch. 9); describing both an empirical observation, and its broader implications or deeper meaning. This approach criticises “thin descriptions” of cultural ethnographic analysis that only give empirical findings without contextualising them properly.

In line with Bucher’s essay on the technicity of attention, this thesis will not be focusing on describing what collaboration is, nor will it be an exhaustive study of what all the features on GitHub exactly do. Rather, it will show how software has the capacity to produce and instantiate modes or aspects of collaboration, specific to the environment in which it operates (cf. Bucher 2012, 13). In what follows the empirical findings and discussion will therefore repeatedly alternate to develop the central thesis. GitHub as knowledge-based workspace is both an infrastructure that can be used through local applications such as the command line interface or the native application,27 and a web-based platform that you can visit and explore. For the first perspective we may take a closer look at the core infrastructure underlying the platform in order to discuss how computer-supported innovation, knowledge sharing, and community building can result from this foundation. After that section we can then start considering the wide range of features and possibilities that follow from the specific implementation on GitHub. In the final section of the main part this implementation is revisited in the light of activity transparency and awareness on the platform.

4. A System for Distributed Revision Control and Source Code Management

This section will discuss how Git helps producing and instantiating specific modes of collaboration that are specific to the platform. Git is approached here as technical foundation to support functionality that is discussed subsequent sections. First I will describe Git on the level of its distributed VCS architecture, after that I focus on data storage in particular to discuss modes of collaboration.

4.1. Moving From Centralised to Distributed Version Control Systems

Version control systems play an important role in the software development process (Sink 2011; Chacon 2009). As one of the main project management strategies, source and version control is needed keep to track and structure cooperative software development. Brian de Alwis and Jonathan Silito summarise the key differences between centralised and decentralised systems. According to the authors, decentralised VCSs are succeeding centralised VCSs as the next generation of version control (2009, 36).28 Realising that a change at the core of the development process will affect the workflow, de Alwis and Silito summarise some of the differences between these systems and describe the rationales and perceived benefits offered by projects to justify the transition between them. In a centralised VCS “write-access is generally restricted to a set of known developers or committers” (37, original emphasis). As a second characteristic, they write, “modern VCSs support parallel evolution of a repository’s contents through the use of branches”. The developers thus require access in order to contribute and earn this by submitting quality work. This way, the quality of a project can be secured. Branching is often used to split the mainline branch of development from the smaller challenges during the development. This way, challenges of different scales can be stored and developed at the same time, and at a different pace.

Raymond (2008) refers to DVCSs as a third generation of version control systems and started appearing in the late 1990s and early 2000s (Harper and Kivilinna 2012, 3). Decentralised systems do not require a central master repository, because “each checkout is itself a first-class repository in its own right, a copy containing the complete commit history” (de Alwis and Silito 2009, 37). Each developer, then, is essentially contributing to his own copy of the project, rather than to a shared project. Branching and merging are also used differently in development supported by DVCS: “DVCSs maintain sufficient information to support easy branching and easy, repeated merging of branches. The ease of branching has encouraged a practice called feature branches, where every prospective change is done within a branch and then merged into the mainline, rather than being directly developed against the mainline” (37, original emphasis). The fact that repositories in a DVCS store the complete revision control history is particularly interesting. Because of it, they lend themselves very well to distributed and disconnected development (37). For example, remote locations do not need to have a constantly stable network connection with the central repository, and a change or problem in a single, central repository is not as permanent or dangerous anymore. Every repository is effectively also a backup that reduces the risk of data loss or overwrites involved in such disconnected processes. De Alwis and Silito’s research based on the Perl, OpenOffice, Python, and NetBSD open source projects resulted in five anticipated benefits of DVCS over CVCS: “to provide first-class access to all developers … to support atomic changes … simple automatic merging … improved support for experimental changes … [and] support disconnected operation” (38). For programmers working with these systems on a daily basis, the main challenges seemed to be the transition from one workflow to another, and the compatibility and communication issues that come with it (38–39). Because each clone is a copy of the complete project, there are also legal ramifications to consider for these projects. Once a commit is submitted, every connected developer will have a copy as well. It seems, then, that open source software development and DVCS complement each other in interesting ways and are both fundamental layers of the Git infrastructure (section 5.1.).

Gavin Harper and Jissi Kivilinna discuss DVCSs in the context of open source software development, while also drawing comparisons to traditional CVCSs. The main argument they make is that distributed version control is beneficial to open source software development, but that it is contingent upon project requirements and deployed models of governance (1). Just like de Alwis and Silito they note as well that branching and forking make for curious aspects of DVCSs (4), because each clone of the project is at the same time a fork or branch (Fung et al. 2012). However, they also stress that this is not a typical fork, but rather a kind of “light-forking”: “They argue that historical forks where source code of [a] large project is copied under different governance differ significantly from forks whereby a developer makes a small, or insignificant change, ensuring that the core of the project remains unmodified” (Rigby et al. 2011, qtd. in Kivilinna and Harper, 4). DVCSs also stimulate developers to experiment, because any amount of branches can be tested without making permanent changes to a main branch and thereby risking the project development. The result, according to Kivilinna and Harper, is an ad-hoc workflow and interaction between the developers that consists of simple merges and small operations. The use of feature branches may improve the quality-of-code over traditional development iterations. On the level of community, DVCSs also significantly lower the entry barrier for new contributors, for new members to join the development, and for improving learning opportunities for starting programmers. As a result, write-access does not have to be restricted for reasons of quality control anymore (section 5.1.).

4.2. Storage and Source Code Management in Git

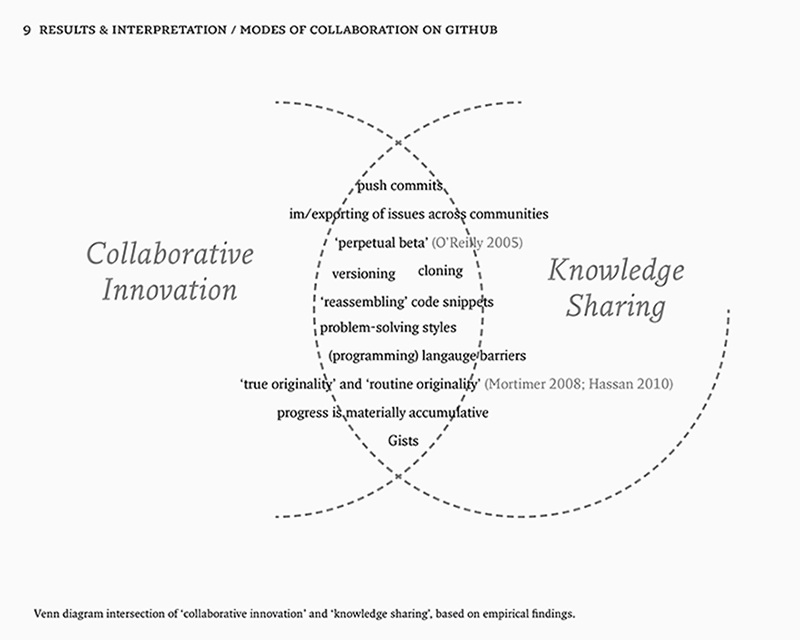

At the core of a version control system like Subversion is the repository, which refers to a data structure that organises the data in a central location.29 In Git, this works in a similar, but slightly different way. In Pro Git, Scott Chacon notes that DVCSs have no central location for storage anymore, and this has several implications. First, nearly every operation is local. Which, in turn, determines the three states that files can reside in: they can be committed, modified or staged (6–7). Respectively, these states correspond with the three main sections of a Git project: the Git repository, the working directory and the staging area (7). Because nearly every operation takes place locally, Git becomes an infrastructure for an environment where individual developers work on projects in a highly disconnected, yet cooperative effort. Obviously, this makes it hard to find and develop projects with complete strangers. The GitHub platform, then, is not so much a hub in the technical sense, but rather a social hub to connect with projects or people and that synchronises individual work into cooperative effort. In that sense, GitHub acts as a matching service. Second, Git has what Chacon calls “integrity” (6). He describes this as follows:

Everything in Git is check-summed before it is stored and is then referred to by that checksum. This means it’s impossible to change the contents of any file or directory without Git knowing about it. This functionality is built into Git at the lowest levels and is integral to its philosophy. (6)

Although this may come across as a technical detail at first, it is essential to understand the branching and merging operations that are so important to collaboration on Git. By integrity, it seems, Chacon points to a philosophical consistency in how Git uses checksums to refer to data in the repository. This referring happens via the index, which organises the data in the repository by pointing to specific parts in it. This way, the repository never has to change, and the index can simply refer to all elements that are required in the working directory. This is what the checksum refers to; the unique sum of hash values (in this case of the required elements for a specific point in the development). Branching or merging simply results in a different checksum that can still refer to the same elements in the repository. The repository, then, is a collection of snapshots that can constantly be reused to form new code assemblages. These assemblages occur when different project networks (not just code, but also the associated community and organisation) converge and become integral to one another (cf. Dodge and Kitchin 2011, 7). If we consider creativity and originality here, this model prefers a model of innovation that is based on combining and reassembling pieces of existing content. This enables “remixing” from small snippets of code to complete features. Third, Git generally only adds data. As long as you work locally in the working directory, you are vulnerable to data loss. But as soon as you commit the files, it is very difficult to lose or remove data (6). A typical workflow in Git starts with modifying files in the working directory, followed by staging them in the form of snapshots, followed by pushing a commit, which permanently stores the staged files in the Git repository (7). Contributions are hard to “forget”, which favors development of software that embodies the logic of a future based on past data.30 Progress is not so much linear as it is materially accumulative. This idea of creating something based on repurposing existing pieces of code supports distributed modes of collaboration that seek to create assemblages. It also raises critical questions whether we should remember everything just because we can.31

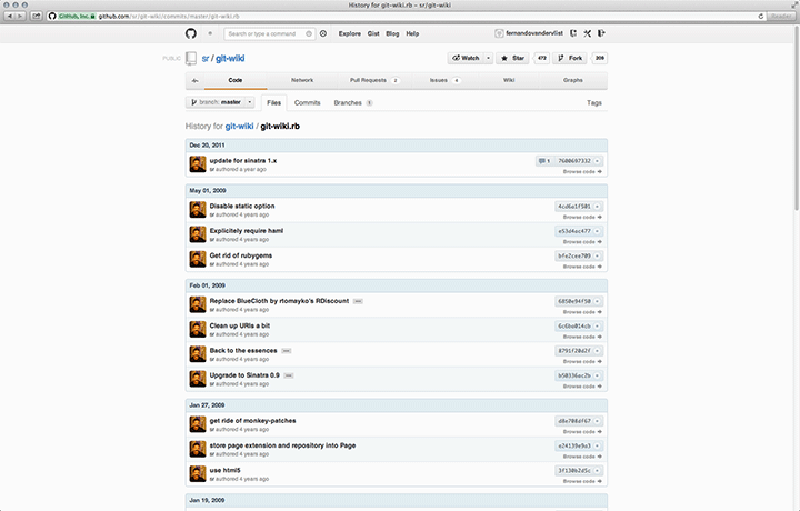

Another major aspect to storage in a DVCS is versioning. Instead of saving changes between files as they change, Git just stores snapshots (Chacon, 5). This has important consequences for the notion of ontology and managing change. Timothy Redmond et al. presented some basic requirements and initial design of a system that manages change to an OWL (Web Ontology Language) ontology in a multi-editor environment (1). Among the requirements they identified for that system is “concurrent editing” (2), which deals with conflicting changes to a project. The interesting thing, however, is that Git doesn't seem to really deal with conflicting changes. Instead of forcing a user to choose between continuing either this version or that version, it just saves them both, suspending the project direction. The outcome is not many dead projects, because projects are sometimes forked, or issues are revisited in discussions months later.32 This tells us that decisions are made and coordinated in a different way. Another requirement the authors identified was “complete change tracking” (2). While discussing it, they point to the need to see the history and evolution of an ontology. Although this certainly seems true, the idea of ontologies is itself problematic. As critically discussed by Florian Cramer, ontologies are both useful and problematic: “they reference what computers, as purely syntactical machines, cannot process, and which can't be mapped into computer data structures except in subjective, diverse, culturally controversial and folksonomic ways” (2007). If that is true, then the idea of managing change in an ontology might be problematic as well. Certainly there are different interpretations of what progress means, and Git only captures one of these interpretations. Git’s idea of progress or innovation seems to be oriented towards endless revisions that are not necessarily advancing towards a common goal. Instead of creating something new, it is often easier to revise something existing. In terms of workflow, this means that what Ian Mortimer calls “true originality” (Mortimer 2008, 16–17; Hassan 2010, 366) is often overshadowed by “routine originality”.

The specific versioning method has become an important point of critique on Git. As identified by de Alwis and Silito (2009, 39), Koc and Tansel (2011), and Harper and Kivilinna (2012, 7), the absence of an “understandable version numbering system” is one of the main disadvantages of distributed systems and results in a less intuitive experience: “due to the potentially unclear methods of tracking modifications including a hash of the changeset or a globally unique identifier as a means of identifying the current state of a software project” (Koc 2011, qtd. in Harper and Kivilinna 2012, 7). This makes it useful to consider how this dictates traceability and versioning of modifications. Harper and Kivilinna continue to stress this: “In situations where there exists a centralised server containing a single repository, all changes made thus represent the singular state of the software project at a given point in time. However, should this be distributed, there does not exist a single coherent version that can be tracked” (7). Working without a single version could effectively mean losing the sense of progress towards a final version (Figure 4.1). This not only makes it harder to coordinate group work; it also becomes problematic to define states in the development of a project, and makes it a lot harder to recruit developers in the first place. The direction, if there is any, constantly changes. If there is no clear direction behind a project, then why should anyone want to spend time and effort on a small or larger project? To answer that, it is necessary to reframe what makes a project in relation to collaboration.

4.3. Nested Assemblages of Code

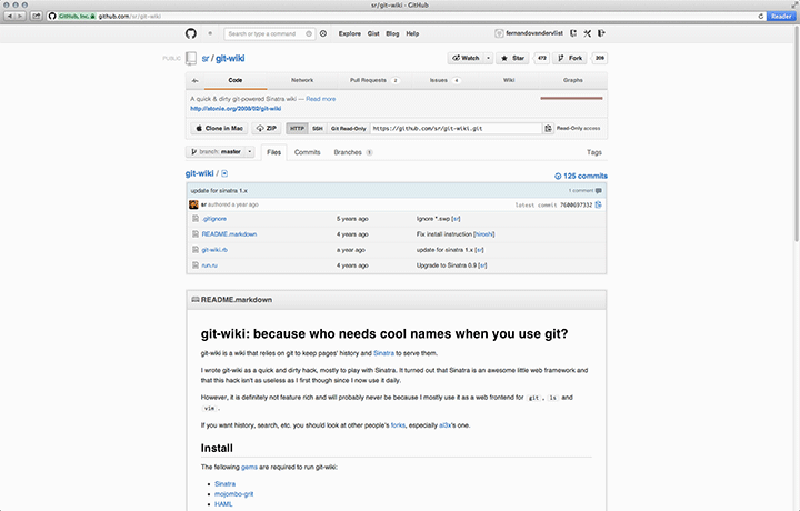

As far as the system is concerned, projects do not exist. There are only repositories consisting of files and directories, records of changes, the commit objects, and the heads that refer to these objects. To create a new project, one can either create a new repository with “$ git init” and add files with “$ git add”, or clone an existing repository with “$ git clone [URL]”. Each repository has a unique resource identifier (the URL), and if the project is on GitHub it has a project page with an overview of activity, issues, and files, including a “README.md” (markdown) file that contains an actual project description (Figure 4.2). For developers, projects may span over multiple repositories.33 Each fork or clone results in a new repository, but may still be part of the same project. A new feature or a version of the software that is compatible with a certain platform is often created as a fork or branch of the main project.34 Another example is the Android project, which started as a fork of Linux and has recently been re-merged with the mainline.35 As separate projects, different distributions developed different solutions to certain issues. This lead to many heated words over what the right solution was to specific issues, or just what thing Android actually was in relation to Linux. What this demonstrates is the “torquing” (Bowker and Star 1999, 190) that happens when different conflicting classification schemes are being merged. It’s not just about merging code; communities, organisations, and ideas are merged as well.

To describe the work-style where each repository evolves independently of the other, Peter Rigby et al. have proposed the notion of “episodic collaboration” (2010). In their words, “[t]eams and individuals work independently and in parallel, and then merge later, leveraging git’s fast and easy merging” (3). For another work-style where repositories are shared they introduced the notion of “continuous collaboration”, which they describe as “[t]he scheduler, and the … networking teams can coordinate work, dividing labor and sharing contributions on a common sub-task via their shared repository”. Both are useful models to think about collaboration in relation to Git. Episodic collaboration, for example, accounts for the modularity of code edits that are committed without any knowledge about the endeavours behind a project; committing just for the sake of committing. This can still be very productive, a bit like dialogue or what Lev Manovich termed “media conversations”. That is, commits become tokens to initiate or maintain a conversation (2009, 326). On the other hand, continuous collaboration becomes useful when thinking about teamwork and labour division among developers. Maybe the former cannot be counted on, but might be useful for solving difficult issues or initiating new directions, while the latter can be planned and coordinated much more efficiently.36

5. The Practice of Collaboration in an Open Source Software Repository

In the previous section I have shown that the specificities of Git has resulted in ad-hoc workflows, and a particular understanding of projects as nested code assemblages that also contain communities and organisations which are relative to that code. This resulted in two general modes of collaboration that coexist in the same space. It was necessary to discuss Git because it is one of the ways that GitHub is used as infrastructure, but also because it forms the foundation for GitHub as a web-based platform. Continuing this exploration we can now start addressing GitHub. From here on, I look at GitHub as a socio-technical construct to understand how certain features augment or serve to deal with particular strengths and shortcomings of Git, and relate this to the implications for collaboration.

5.1. A Reconfiguration of the Public and the Private

One of the things that the open source philosophy promotes is free distribution and access to source code and implementation details (Stallman 1985). This seems opposite to the notion of ownership. N. Katherine Hayles argues that “[t]he shift of emphasis from ownership to access is another manifestation of the underlying transition from presence/absence to pattern/randomness” (1993, 84). Hayles wrote this in the context of what she called the disembodiment of information. When software is not a package deal, but constantly in development, it also loses some of its materiality. The question of accessibility becomes a question of permissions that govern levels of availability and influence. The Help pages on this topic explain these permission protocols: “user accounts and organisation accounts have different permissions models for the repositories owned by the account”.37 User accounts have two permission levels; the account owner has full control of the account and the private repositories owned by the user. Collaborators have push/pull access. As there are only two permission levels here, there is not much room for protocological hierarchy in the development. Hierarchy on GitHub follows from a different set of socio-technical factors (section 6.1.). Organisation accounts have more levels of access to organise account teams. A distinction is made between owners, admin teams, push teams, and all teams (pull, push, admin and owners). Axes of permission (parameters) are repository access, team access and access to organisation account settings. To summarise the permissions briefly: the owner can do everything; the admin teams cannot access account settings and cannot add or remove users to the repositories they admin; push teams can push (i.e. write) to the repositories, but cannot access account settings and can only view team members and owners; and all teams can pull (i.e. read) from the repositories, fork, send requests, open issues, edit or delete commits and comments, and edit wikis. In this environment, hierarchy is about trusting someone with the permission to have influence. Ownership, in other words, is still there, but is layered into levels and assumes a different form. The owner is not so much a possessor of property as a maintainer or coordinator of a process. Ownership and direction are intertwined, and the owner is also the lead developer.38 This is because at the other end, the default right of new users is still quite large. Any user can suggest a new direction and fork the complete project, which makes that user the owner of the exact same data, but not of the same community supporting it.

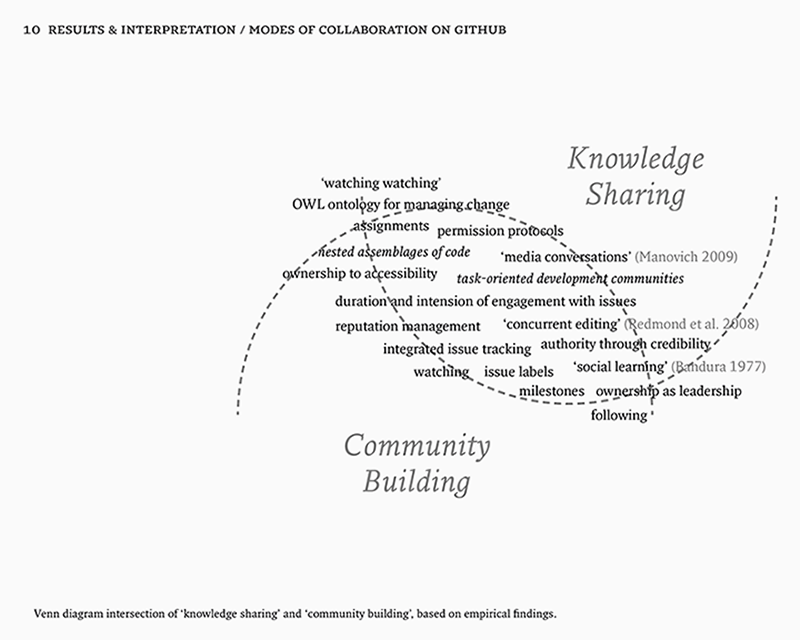

But then how is consensus reached among the developers? Hayles observed that a shift of emphasis from ownership to access also results in a reconfiguration of the distinction between private and public (Hayles 1993, 84). GitHub offers private and public repositories, and by paying a monthly amount of money, developers can keep their projects private39. By default, a free repository is public. In this particular dichotomy between private and public, transparency of information is less scarce than information secrecy. There is an inherent risk of privacy, and extra efforts are required in the form of security and general cautiousness.40 In the public section of the platform there is a different political architecture, one that seems to be more specific to the Web. Jodi Dean proposed “neodemocracies” as an alternative to the public sphere (2003). Instead of the nation, she considers the Web as “zero-institution” (i.e. as empty signifier that signifies the presence of meaning) where consensus is replaced by contestation; actors by issues; procedures by networked conflict, inclusivity by duration, equality by hegemony, transparency by decisiveness, and rationality by credibility (108). This “communicative capitalism” is a useful step towards thinking about publicness on platforms. In this model, it is key to focus on issues, not actors as vehicles (110). Inclusiveness is not scarce anymore, because everyone has a certain degree of access. What matters is duration, or the prioritization of interest and engagement with an issue, rather than inclusion for its own sake (109). Decisiveness replaces transparency, because power is not hidden and secret anymore, and what matters is decisive action. When communities gravitate towards issues or projects on GitHub, it is not about rational debate. Consensus is not reached through reasoning, but through quality and credibility behind the commit, which increases reputation. Credibility replaces rationality. This perspective calls for a better understanding of GitHub as an environment where commitment to issues, social status, and reputation management matter. This makes issue-oriented coordination (section 5.2.), task-oriented development (section 5.3.), and activity capturing (section 6.) sites of further inquiry.

5.2. Issue-Oriented Coordination

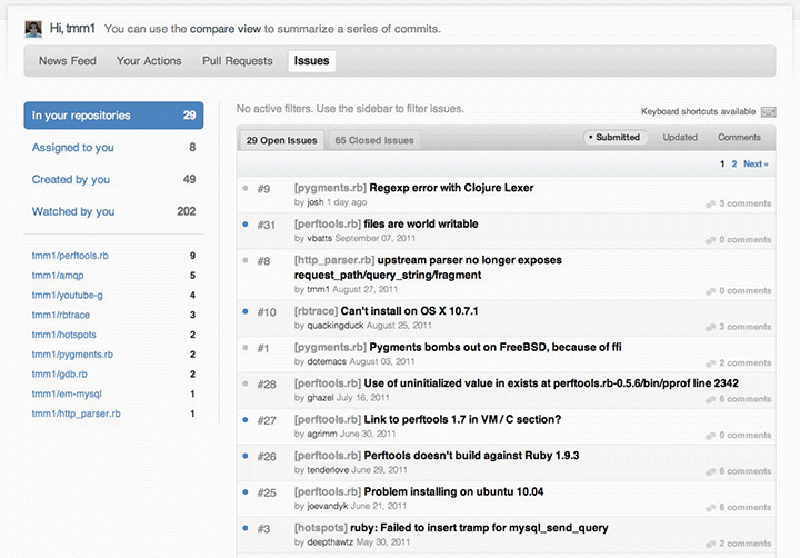

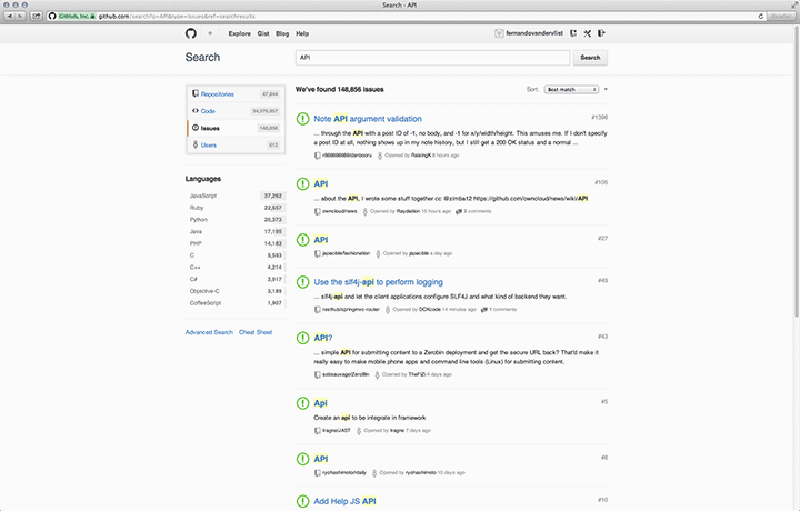

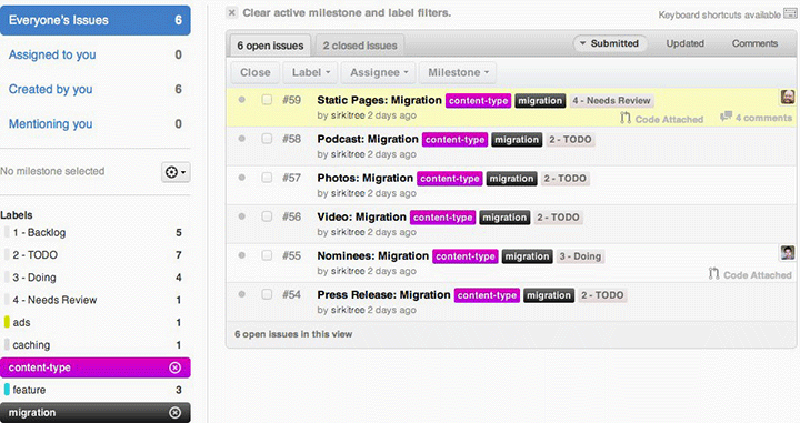

On GitHub, every user can create and view issues on public repositories.41 Introduced in April 200942, an integrated issue tracking system supposedly lets you deal with issues “just like you deal with email (fast, JavaScript interface),”43 and allows users to categorise, apply labels to these issues or assign them to someone. Additionally, users can also vote on issues that you want to see tackled, and search or filter for them specifically. In April 2011 they launched the 2.0 follow-up version.44 As units of coordination, issues are allocated as tasks and managed via the Issue Dashboard45, which looks similar to an email inbox. An issue has a “gravitational core”, drawing developers towards a project. Issue Tracking is about managing these issues, but also about integrating the “hooks”46 they provide in other features such as Search (Figure 5.1).47

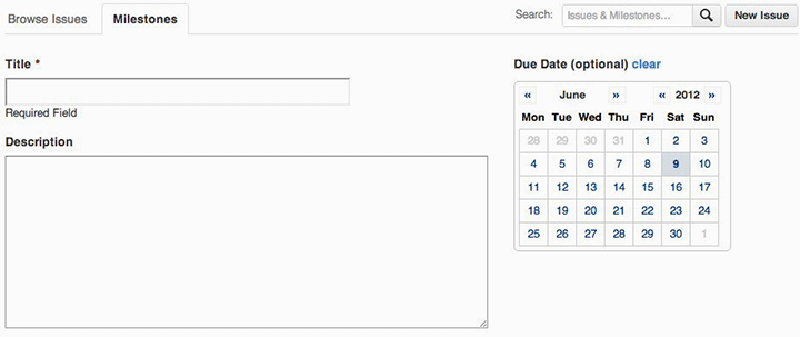

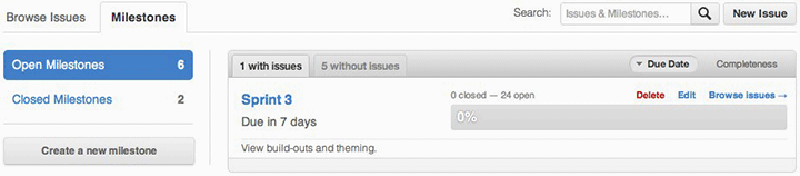

Three features complement each other and make that issue tracking is integrated into the platform. First, with the assignment feature a particular developer can be assigned to work on a task. Assignment is useful because it is “captured” (Agre [1994] 2003) and made visible to other developers as well, which prevents developers from working on the same things, and gives confidence in the project’s progress. Second, progress is measured with so-called milestones. Milestones enable the grouping of issues and focusing your issues within time blocks; indicating fixed points on the timeline of a project or issue (Figure 5.2). Third, issue labels are a simple and powerful addition that lets you add metadata to an issue. To help users on their way in adding useful labels, GitHub suggests some default labels such as “bug, duplicate, enhancement, invalid, question, and won't fix” (Bitner 2012) (Figure 5.3). These labels seem to result in a mixture of actual issue descriptions such as “bug” and issue statuses such as “won't fix”. Labels are not only useful as organising mechanism, but also enable folksonomy-based filter and search mechanisms. Behind each of these features, the notion of the “perpetual beta” (O’Reilly 2005) appears as an implication for collaboration on GitHub. Perpetual beta replaces the software release cycle in favor of the open-source maxim to “release early and often, delegate everything you can, [and] be open to the point of promiscuity” (Raymond 1998). There are only versions, which are themselves assemblages of other versions, coordinated through issues. As Jim H. Morris notes, interaction between users and servers go over the net, so they can be recorded and replayed, which can be used for much more effective bug analysis and performance measuring (2006). It also has consequences for the way features are implemented. They are not distributed as updates, rather it is a continuous feedback loop where features are implemented in rudimentary form and tested by the users. Users thus become passive co-developers.

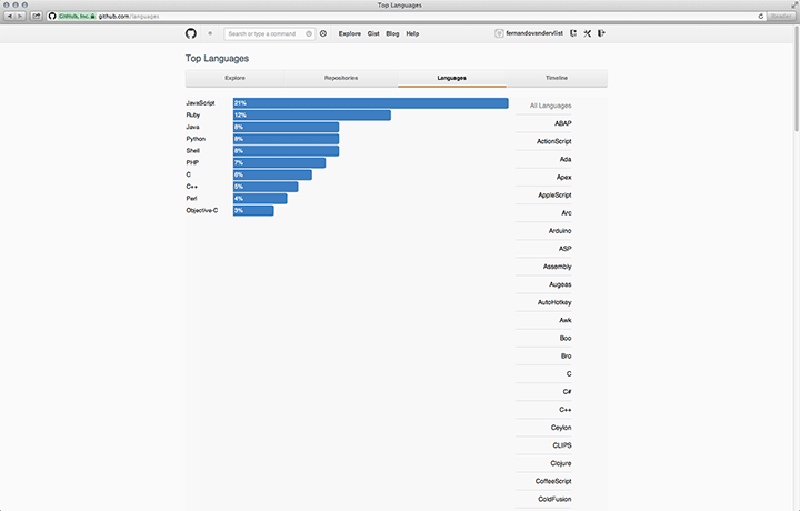

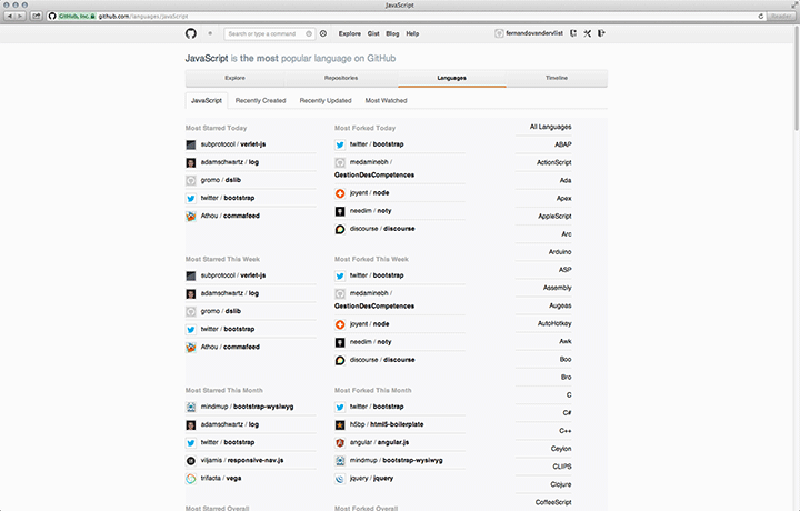

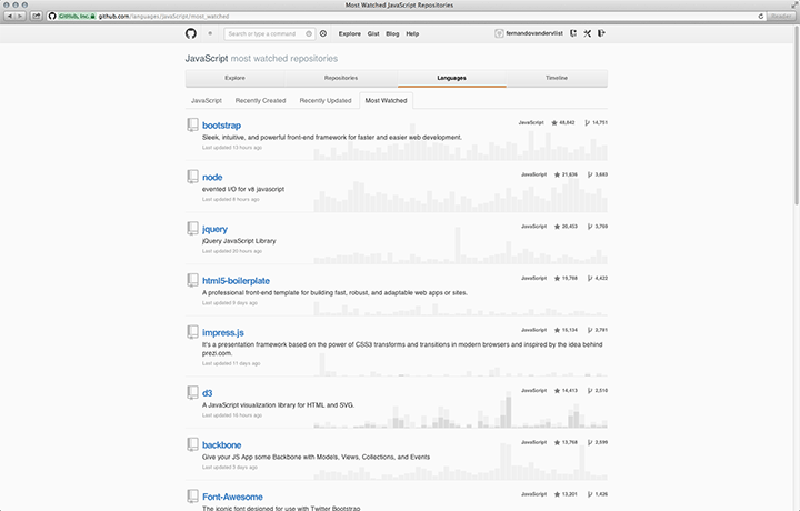

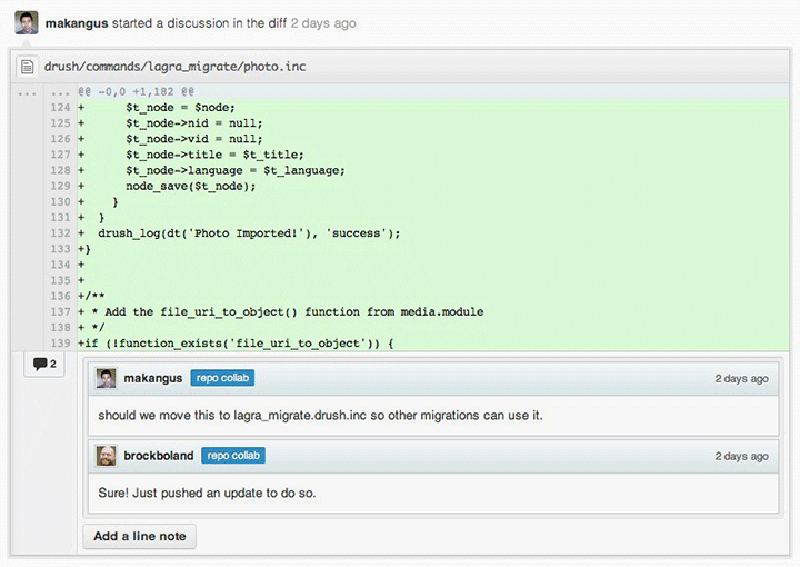

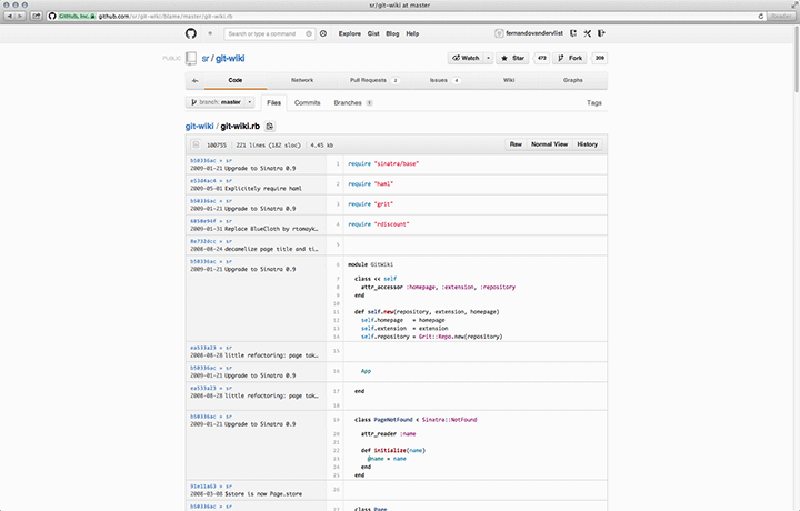

A consequence of issue-oriented coordination is the integration of code reviewing48 into the default workflow. As in any development process, reviewing your own work or the work of other peers is an essential part of the process that both quality and legitimacy often depend on. When source code can be publicly distributed and modified this can create an environment that supports constructive criticism. Assuming that in a typical collaboration not all developers will be professionally trained software engineers, collaborative development will be interdisciplinary in essence. This means that it is also necessary to keep in mind that, to a certain extent, teams become dependent on a number of shared, established concepts and skills. Source code scrutiny assumes a certain level of code literacy. In his introduction to Software Studies: A Lexicon – a seminal work that tries to establish a common vocabulary that binds thinking and acting together within a professional community (2008, 9) – Matthew Fuller identified this required skill as well. Caroline Bassett paraphrases this: “Fuller argues that the capacity to engage with code-based production and to engage with technicity of software through the “tools of realist description” requires certain technical skills, alongside skills that come from disciplines at some distance from computer science and the contained project of realised instrumentality it prioritises” (2012, 122). Bernhard Rieder and Theo Röhle also linked code literacy specifically to source code scrutiny: “[the] possibilities for critique and scrutiny are related to technological skill: even if specifications and source code are accessible, who can actually make sense of them?” (2012, 76). In order to review code, then, one would first need to learn how to both read and speak code. Moreover, it becomes even more complicated when we consider the amount of different languages used on GitHub.49 Although with 21% of the projects written in JavaScript, there currently seems to be a preference for this programming language.50 However, this is also the exception. Most languages are only used by a tiny group of collaborators, resulting in language barriers. For this reason, there is an overview51 for each language that shows a number of metrics on projects and the developers about how particular languages have recently been used (Figure 5.4). On top of different language preferences, programmers also use different vocabularies, naming conventions, or solutions to get something working.52 There is never just one solution to solve any problem, which is exactly why computer programming53 can be a deeply creative act and why the style of solution is important as well.54

5.3. Task-Oriented Development Communities

“Social learning” (Bandura 1977) is an important consequence of knowledge sharing and community building on GitHub. Developers can collaborate with a shared repository or with Pull Requests.55 This fork and pull model of collaboration allows for independent work by pushing changes, without upfront coordination, and without requiring access be granted to the source repository. Less commitment is required, reducing friction for new contributors. Jerad Bitner notes:

Pull requests in general are great means of peer review and have helped to keep the quality of code up to everyone’s standards. There’s a bit of overhead in that it may take a little longer for some new piece of code to be merged in, so plan accordingly. But this also means we find bugs sooner, typically before they're actually introduced into the master branch of the code. (2012)

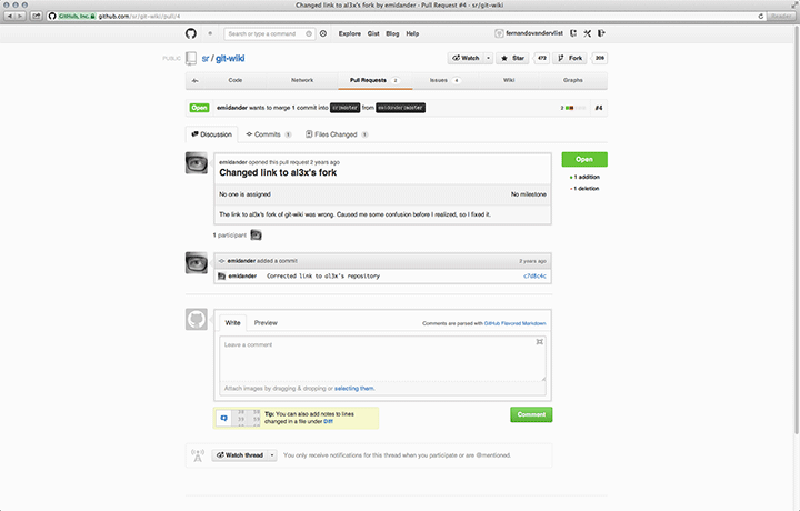

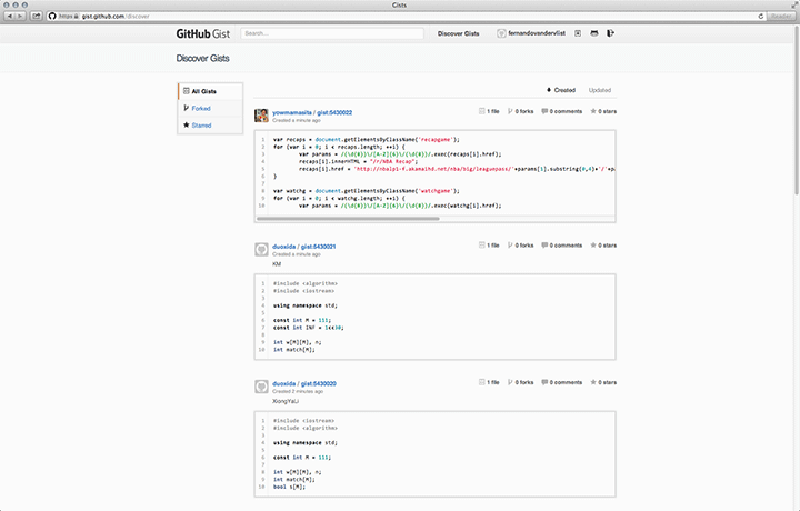

By either forking or branching, developers can suggest modifications to the existing code, or contribute new code. The project maintainer then decides which suggestions will be implemented. Four features work together to enable commenting and discussion. First, in-line commenting keeps everyone participating in the project in the loop with changes that are happening. This allows for intervention on the issue-level, which is an important aspect in terms of involvement and influence.56 Second, commit comments can be used to annotate commits with a comment. These comments also show up in activity feeds and each repository has its own comment feed.57 This makes it possible to communicate in a clear and direct way if there are questions or comments on a particular commit. Informal annotation thus becomes part of the process (Figure 5.5). Third, Gist offers a quick way to share snippets of code with others, and one great advantage is that each gist is also a fully forkable git repository.58 Considering the workflow of a typical programmer, where code is often scraped from a wide variety of places and recombined into something new, this is an important addition to the toolkit. Snippets can easily lead to informal dialogue about objects (Figure 5.6). Fourth, the blame feature shows you who are responsible for changing each line in any file of a project.59 This is not so much a feature to blame a co-developer for doing something wrong, as it is a resource (Figure 5.7). It also brings to the fore that every action on GitHub is publicly attributed to a user profile (section 6.1.). This kind of knowledge sharing directly affects community building. When developers find solutions to problems, they often start watching these (Dabbish et al. 2012). They receive updates on the progress and changes as they are made and learn about how others seek solutions to their problems, resulting in “development neighbourhoods”60 around issues.

In an empirical study on social forking in open source software development, Fung et al. (2012) show that forking – the creation of a new, independent repository by cloning the artifacts from another project (Bauwens 2005) – is poorly understood as a practice in research, because it is often assumed to be damaging to the open source community.61 According to Jon Loeliger, forking could either reinvigorate or revitalise a project, or it could simply contribute to strife and confusion on a development effort (2009, 231). By looking into nine JavaScript programming communities on GitHub, the empirical study by Fung et al. showed that: “forking was actively used by community participants for tasks such as fixing defects and creating innovations. “Forks of forks” were also utilised to form sub-communities within which specific aspects of an OSS product line were nurtured” (2012). Especially interesting here is the notion of “forking a fork”, which constitutes a secondary-level, or even tertiary-level fork and indicates a recursive division of issues into ever smaller units. Another interesting observation is that sub-communities are formed and move along with these forked issues. Ultimately, forking is part of the set of features that primarily aim to direct innovation. Instead of submitting commits to a project in development, forking means creating your own independent path. It follows from having an idea about the project that is somehow different from the views behind the initial project. Once forked, the project is treated like any other (it is the default workflow), and as such it can be watched or followed by new users that think alike. Administrative rights (and credit attribution with it) for the fork remain with the user that has created the initial fork. On the social level, this creates an environment where developers gain reputation by having adopted new and interesting projects. Users with a higher reputation according to the system will also have a greater influence in the diffusion of innovations (Rogers 1962).

Forking produces specific modes of collaboration. To illustrate this, the case of what Jesús M. González-Barahona termed “light forks”62 is useful. According to Rigby et al.: “In contrast to a fork, a light fork exists when a developer makes specific changes to part of the system, but keeps the core or most of the code the same. An example of this style of fork is a Linux kernel modified for handheld devices” (2010, 28–29, original emphasis). This emergence of light-forking is a direct consequence of a particular mode of collaboration that is enabled through that feature. In the case of the Linux kernel,63 for example, there are regular updates in different parts of the tree.64 The authors note that these changes are usually only accepted when they are not too specialised or narrowly focused, which is why the developers must maintain their own light-fork (28). Light-forks combine the ability to keep changes in code in version control, while at the same time staying up to date by pulling changes from the remote repository as they occur (29). Light-forks are a solution to prevent problems of messy merging processes, but also constitute a unique mode of collaborating that is both continuously up-to-date – as it maintains its linkages to the remote – and is at the same time completely yours to work with – as a separate repository. Still, however, the basic implications for innovation, knowledge sharing, and community building remain the same. Fung et al. make a distinction between two layers of abstraction on which forking is utilised: endogeneous and exogeneous. “[The former] occurs within a community of social developers who work on forks for the same software product line … [and the latter] refers to the creation of forks accross communities of social developers” (2012). This distinction shows how forking is linked to community building and knowledge sharing. In fact, one could argue that communities are continuously reassembled because the issues (or forks) they are connected through are continuously imported and exported between different communities. This community merging is interesting to consider as it occurs simultaneously with the merging of code artefacts. In organisations, when such a merger would occur, there are huge consequences and it might take years before a large merge is completed. On GitHub this is not the case, which shows again that an issue-oriented coordination is much more flexible, which (not coincidentally) is also one of the pillars of any good distributed system (Tanenbaum and van Steen 2007).

6. Information Transparency and Activity Awareness on GitHub

In the previous section I have argued how the open source philosophy initiates conditions for collaboration on GitHub. Questions of ownership have been rephrased as matters of permission and access, which has caused a reconfiguration of the public and private. In this reconfiguration, issues are the vehicles that guide collaboration, which is reflected in the integration of issue tracking. Communities become task-oriented, which illustrates that collaboration is coded or programmed. When working in the same physical space, there is always a level of awareness of each other’s activities. On GitHub, awareness systems attempt to recreate that awareness by providing all collaborators with the same mutual knowledge (Gross et al. 2005). Awareness systems such as notification systems and activity streams coordinate and synchronise task-oriented collaborative activity. In this section I look at the system as a whole to better understand information transparency and activity awareness on GitHub. The whole is not just the sum of its parts. More is different, because behaviours emerge as a result of a wide array of actions woven together into a global system.

6.1. Grammars of Collaboration

The dominant paradigm to achieving activity awareness is to create an environment of information transparency (Dabbish et al. 2012; Redmiles et al. 2007; Gross et al. 2005; Sarma et al. 2001). Activity awareness on GitHub is no exception. Philip E. Agre introduced the concept of “capture” as an alternative to the surveillance model of privacy issues. For Agre, these models are cultural phenomena. Capture is a set of metaphors that has different components, which he contrasts point-by-point with the surveillance model ([1994] 2003, 743–744). The surveillance model has its origin in political theory, while “capture” has roots in the practical application of computer systems (e.g. tracking, tracing) (744). Tracking systems keep track of significant changes in an entity’s state (742). We see these on GitHub as well, for instance in the tracing of milestones, forks, branches, and top programming languages. Tracing and attribution of activity occur on every level, which makes capture useful as a structural model to understand how activity awareness is achieved through information transparency.

Grammars of action are central to this model, for they specify “a set of unitary actions … [that] also specifies certain means by which actions might be compounded” (746). Using examples such as organisational coordination and knowledge representation in AI systems, Agre shows that they are not about specific sequences of input and output, but rather about structuring human action: “The capture model describes the situation that results when grammars of action are imposed upon human activities, and when the newly reorganised activities are represented by computers in real time” (746). Agre then divides the model in five stages that constitute a closed loop: analysis, articulation, imposition, instrumentation, and elaboration. In the elaboration stage, the captured activity can be stored, inspected, audited or merged with other records and is thus subject to analysis again, reinvigorating the cycle (746–747). As a set of linguistic metaphors, capture is not about control or what is visible on the screen, but rather constitutes the rules of the game – the grammar we are allowed to collaborate through.65 Worth noting is that these grammars do not need to be externally implemented, because action is impossible beyond this grammar. Although challenged66, it is useful to consider the capture model – and grammars of collaboration with it – for it shows that, from a system point-of-view, the local is connected to the global as two sides of the same coin. The local is found in the global, just as the global is found in the local.

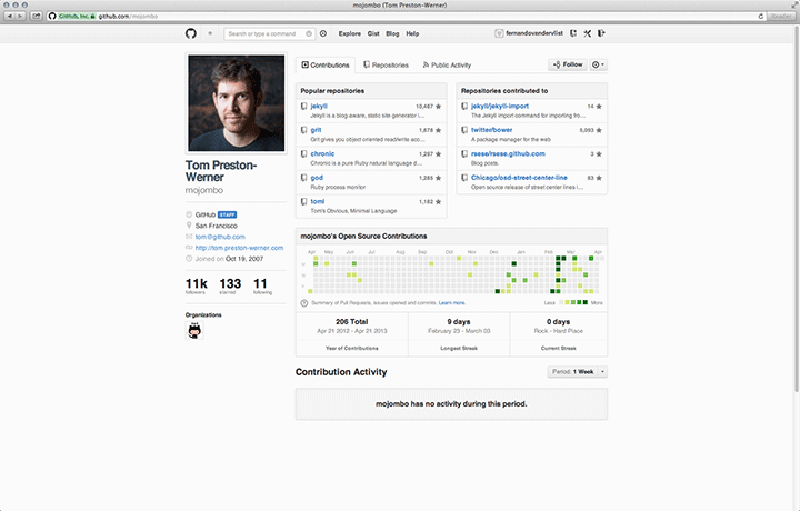

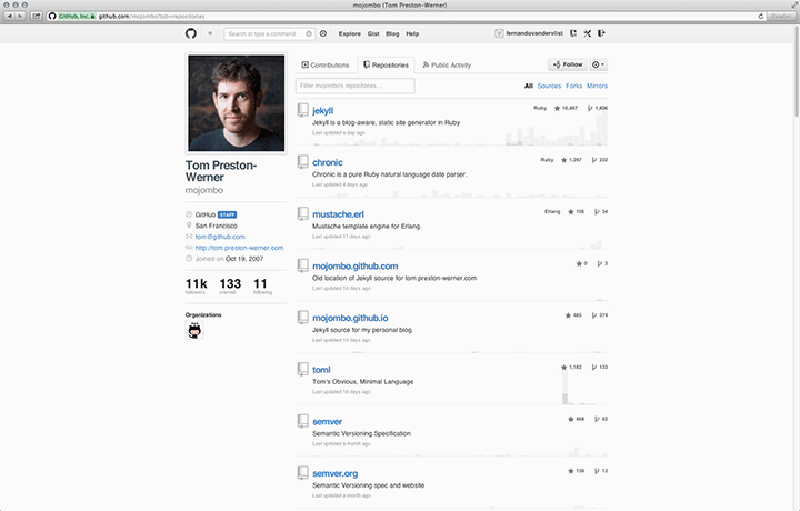

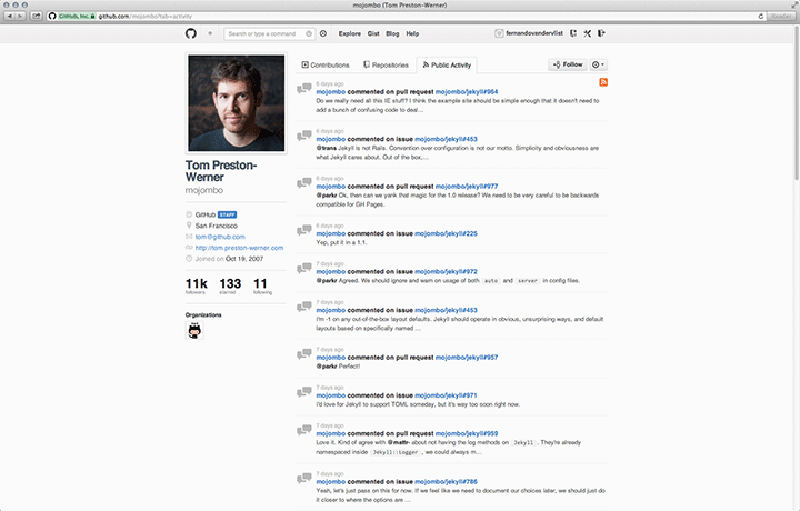

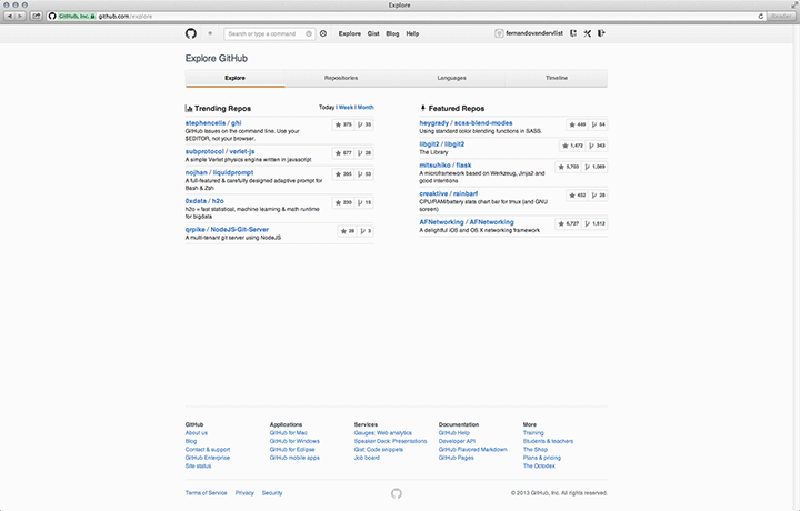

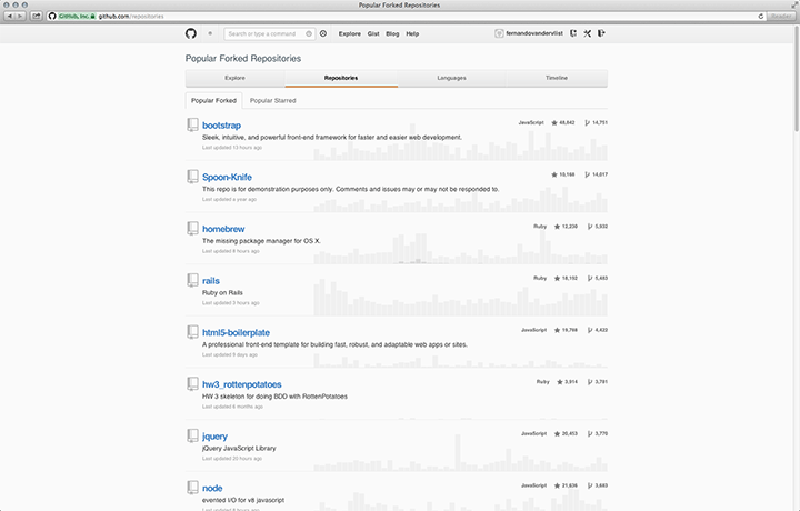

User profiles are the nerve centres or hubs of activity streams. They show us that each of the pieces of data published is also being captured and processed. In order to track the activity of a developer, there needs to be a certain unique identifier. On GitHub this identifier is coupled with a unique username, to which an account is linked. You cannot collaborate if you are not logged in with the right password. A user profile, then, represents the activity of that, and only that, unique user account (Figure 6.1). Although it may seem obvious, it is important for tracking as well as attribution. All user activity is captured and processed by the system. Such information is needed to create an information-transparent environment, which creates a sense of continuous awareness among developers that follow each other. To illustrate, consider forking again. Information about forking is used to rank projects in trending or featured repositories, or popular forked repositories. As a programmed action – where the action is part of a programmed sequence or algorithm – this data can be analysed and returned as feedback to the user. It is because the actions are captured that they may also be used to publish what has been updated, which in turn can be analysed by the system or its users to infer relevancy (Figure 6.2). As a second example, the activity visualisations on the user’s Open Source Contributions are generated automatically by analysing the quantity of recent activity and returning it as public information afterward.67 This information specifically entails Pull Requests, issues opened, and commits.68 Counting is treated differently than receiving credit. Contributions are counted only if they land in the master branch of the upstream. As long as you are contributing to the master branch in your own fork, it does not count. Credit is received when you open an issue, propose a pull request, or author a commit. Credited or not, all activity will still be part of the public activity feed. As long as the “what”, “when” and “where” are tracked, nothing has to go unnoticed (cf. Lessig 2006, 38–60). This means that collaboration becomes regulable, and because contributions are authenticated (i.e. linked to an identity) the system harnesses complex social values such as status and reputation.

This regulability thus leads to a second major consequence of information transparency, which could be called recursive awareness. By this I mean the social system constituted by a repeated transparency in a self-similar way that instantiates complex social value systems such as reputation management, and is constituted through ranking, starring, watching watching, member recruiting, and so on. It’s easy to underestimate the value of awareness, but as Dan Ariely persuasively argued, people tend to be motivated by a sense of purpose and recognition of their efforts (2012).69 According to Dabbish et al. there is a sense of “being onstage':

Many of the heavy users of GitHub expressed a clear awareness of the audiences for their actions. This awareness influenced how they behaved and constructed their actions, for example, making changes less frequently, because they knew that “everyone is watching” and could “see my changes as soon as I make them”. (1284–1285)

The authors were also able to identify that watching was inferred as a signal of project quality, because they would assume that if many people track the progress of that project, it is most likely to be good. It is, in other words, a sign of approval from the community. Self-promotion also follows from this effect of being aware of the awareness of others. GitHub profiles are not just profiles; for recruiters they become resumes as well, because they provide a detailed overview of someone’s investment and quality of his work (Dabbish et al. 1282; Begel 2010, 34).70 There is a certain kind of stability when the overview (read resume) tracks and refers to all your activity on GitHub, which is activity that GitHub has accumulated over time and is contributing to the very projects it stores.71 Such stability is possible, then, because information transparency, and awareness with it, is recursively interwoven into a network of captured activity.

This specific programmed informational environment also instantiates what Dabbish et al. called watching watching. They observed that some respected developers acted as a kind of “curators of the GitHub project space”. Some people tend to have a similar taste in projects, which makes it useful to watch what others are watching. This is also the case when a certain developer works a lot on specific issues, consequently becoming an expert in that category of issues. As mentioned earlier, it is a programmer’s habit to use the knowledge and code that others have developed before them (e.g. copying snippets of code, forking complete projects), resulting in nested episodic collaborations.

6.2. Tracing the Network and Participation Graphs

Metrics are units of measurement that can be used to quantitatively assess a user, process, or event. As such they provide all kinds of information about the system and its use, which, among other things, gives designers of such systems an empirical foundation to improve the system (Kan 2002). There is a continuous feedback loop between design and usage (e.g. perpetual beta). As input, metrics establish a connection with the physical world, because systems can remember, predict, and adapt.

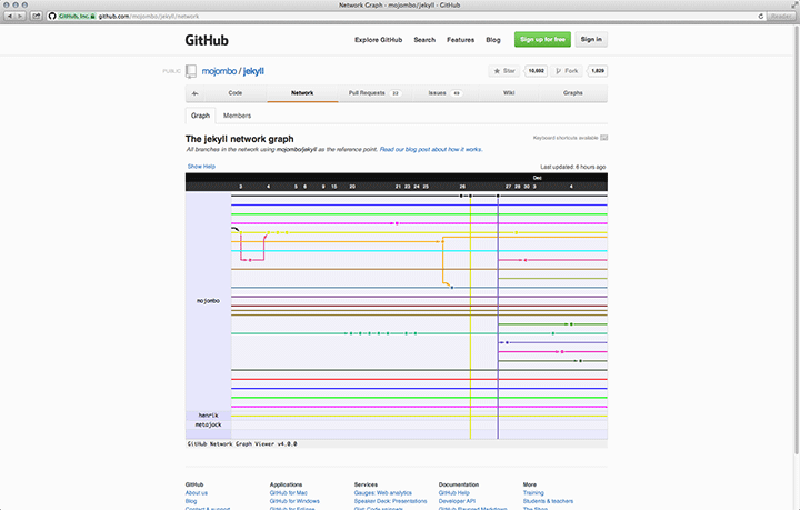

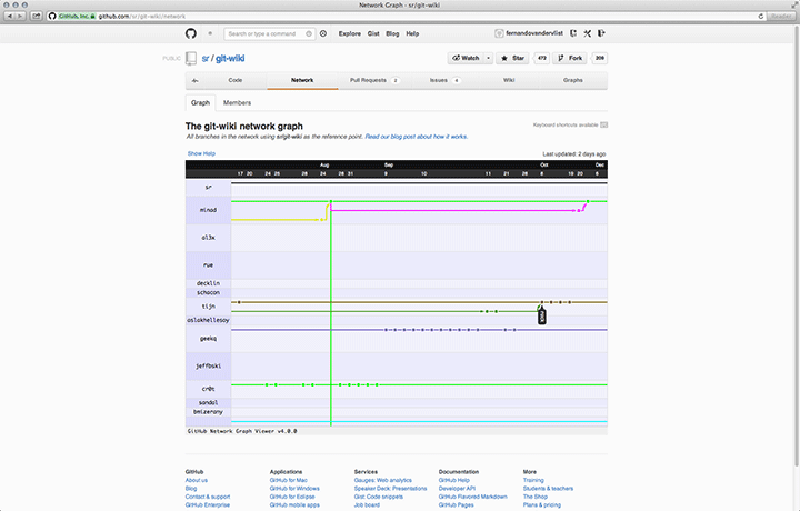

The Network Graph Visualizer72 is a feature that helps collaborators to keep track of what other collaborators have done.73 It automatically visualises the project tree into a schematic representation where a timeline with dates is plotted against usernames (Figure 6.3). When we relate this to the capture model it means that commits, branching and connected activity are captured and returned to the user in visual form. Along the way, certain assumptions are made regarding the question of what will be useful to the developers for the purpose of coordinating collaborative work. The algorithm visualises all commits, the branches, and the repositories that belong to the network. As Tom Preston-Werner notes, this method concentrates on code and not on ego74, which is to say that only contributions are shown that are not yet merged with your version of the project. In other words, it shows only activity that is relevant for the purpose of coordinating parallel lines of development. Another, perhaps less obvious assumption, is the notion that the newest commit is usually the most relevant one for a developer. By default you only see the last month or so, and to see more than that you will have to drag all the way back in time. This obviously makes it harder to find and continue working on contributions from a while back (there seem to be loose ends everywhere), reinforcing the issue-oriented mode of collaboration. In that case it makes sense to work on the newest version, because newer commits will probably have solved (part of) the issue. On the other hand, when the issue is solved, it instantly loses its relevance. Perfectly compatible with the notion of the black box: as long as it works, we should not have to think about it anymore. Is software development really only about making things work?75 On the level of visualisation, this assumption is also connected to western metaphors of time as a linear, sequential stream of events that “just takes us along”. Its horizontal, left-to-right orientation is typical of western thought (cf. Leong 2010).76

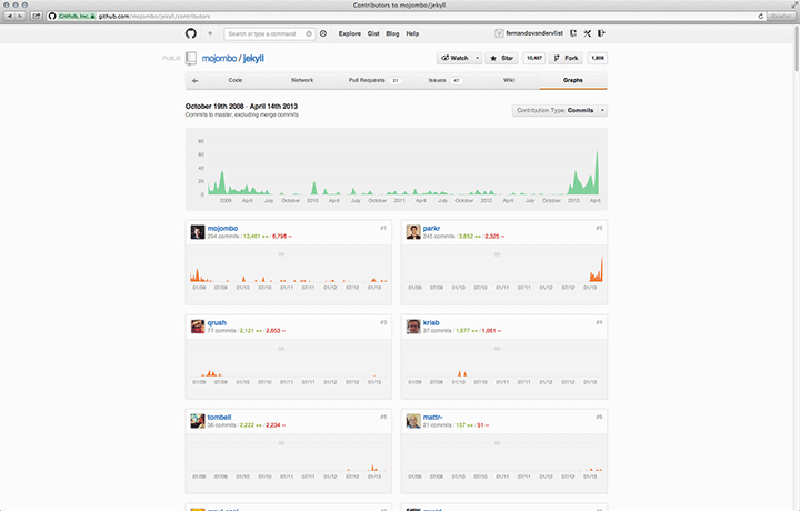

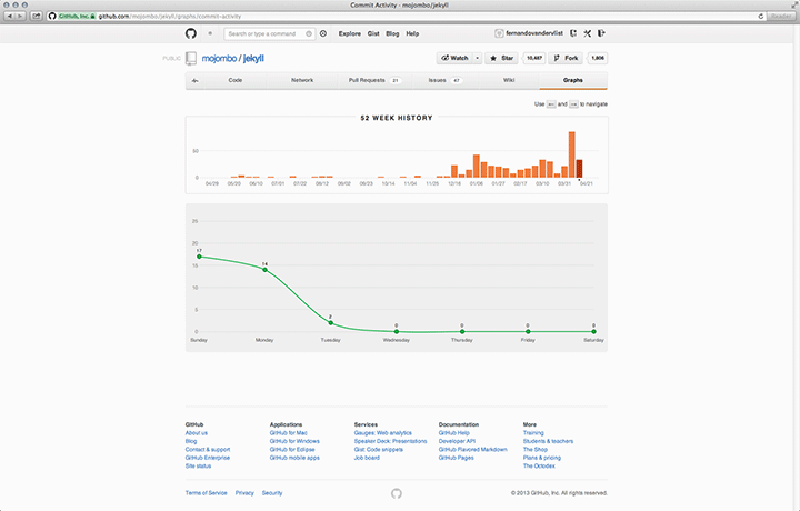

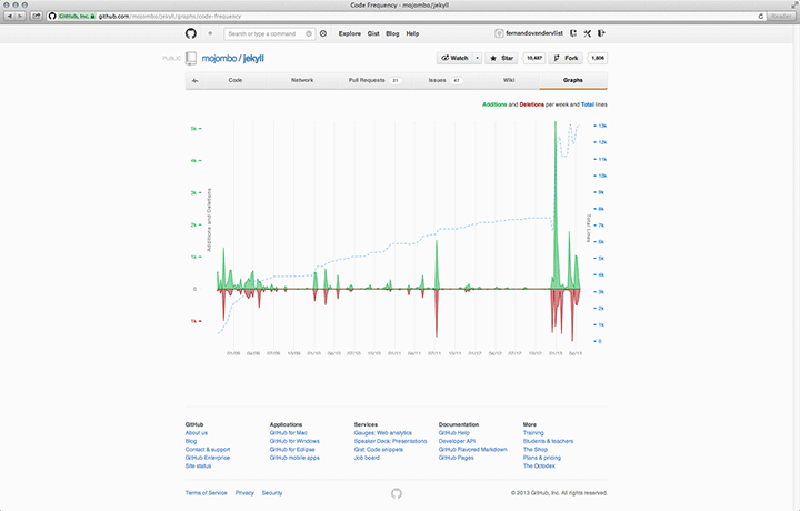

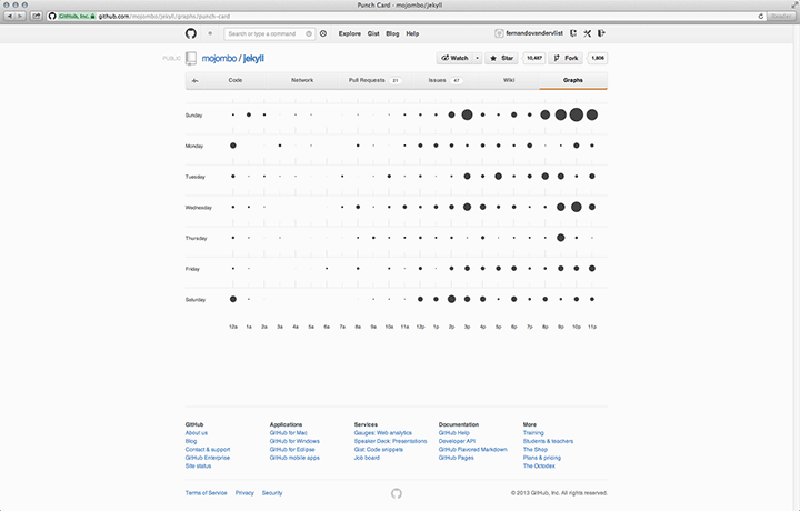

In addition to the network graph, several other computational graphs are implemented on GitHub as well. These other visualisations can be used to discover who is contributing to a project (Contributions graph), commit activity over time (Commit Activity graph), or additions and deletions during the project’s lifespan (Code Frequency, and Punch Card graphs).77 This set of graphs comes with each individual repository, and as such only covers metrics that are relevant to collaboration on that repository.78 All of these visualisations are based on metrics of user participation. In the Contributors graph we get to see who has contributed, when they committed to the master branch, their number of commits (for which they are assigned ranks), and when peaks in activity occurred (Figure 6.4). In the Commits graph the metrics are anonymised, highlighting patterns in quantity of commits over the course of a year – not quality or size of these commits (Figure 6.5). The Code Frequency graph shows additions and deletions in code quantified as the number of lines of code (Figure 6.6). And finally the Punch Card graph gives a zoomed-in account of the number of commits for each hour of the week (Figure 6.7). The graphs help users to read participation as patterns of activity, indicating to which extent that project is engaged with. It is because these graphs show the larger shifts and dynamics within a project that developers can infer a sense of legitimacy (i.e. communal approval) from them.

Each of these graphs is continuously updated with new data, showing that the local and global (or human and system) are continuously mirrored back to each other. The input is captured on the local level, but because it is updating in real-time, it is at the same time mirrored in the form of a visualisation; as part of the pattern. Individual contributions are always a fragment of the whole. Mirroring is used as a metaphor here, suggesting both simultaneity and difference, but also self-reflexivity. Looking into a mirror may be confronting, because it shows you things you would not see otherwise. Someone else would have to tell you. Mirroring, as opposed to tracing, may be a more appropriate metaphor, then, because synchronisation happens instantly. In this environment you do not need this other person to tell you anymore; you instantly “hear back” from the whole community over the whole lifespan of the project.

6.3. Complementary Approaches

Now that so many open source projects are being traced and developed in the same technical environment, it allows for new insights into the collaborative software development process (Heller et al. 2011; Mockus 2009). In these cases visualisation techniques could be used to locate patterns in large datasets, which could then be the starting point for further research into the emergence of such patterns. As I have tried to argue, there is a complex interplay between human action and the various systems on GitHub. Software has the ability to produce and instantiate modes of collaboration that are specific to the environment in which that software is implemented. This means that digital ethnographic research (Murthy 2008) and other ethnographic approaches79 could be a useful addition to understand how the system is adopted on the micro-scale; that is a situated account of how developers on particular projects and in specific collaborative networks use the platform as infrastructure, struggle with conflicts, or solve issues within the limitations set by the systems. One could study different networks of influence and (in)dependencies, self-censorship, the extent to which social and cultural differences are expressed and repressed by the system or the organisations, and many other things. For this kind of research, built-in versioning history, commit history and other analytics may for instance be useful to understand how particular issues have emerged, and how their solutions are constructed. This is what Richard Rogers called “in-built reflection or reflexivity” in the context of Wikipedia (Rogers 2009, 28).80

As the largest code-hosting platform, GitHub is now the main place where developers working together on open source projects organise themselves. Reputation on this platform has become a currency that has real value outside the digital community as well as within it (Dabbish et al. 1282). Because activity is public, it becomes a space where developers are recruited based on their levels of commitment, quality and number of their commits, and the type of projects they are associated with. Reversely, based on profile information such as organisation and names, developer recruitment on GitHub can also follow from real-world credentials. Another consequence is that, like other collaborative endeavours such as Wikipedia, one of the recurring issues is the assurance of quality control of shared knowledge. As on Wikipedia, this is achieved in part by what Morgan Currie called “commons-based peer production” (226–227),81 which emphasises consensus-building over the quality of a single version. Issues are resolved in disconnected instances (e.g. forks, branches) and are later merged with the master branch. This happens in an environment of organisational hierarchy (e.g. upstream, pull or merge requests, access permissions), but versions can always be forked or rearranged into different assemblages and different organisations. In contrast to Wikipedia, GitHub is also not expected to uphold a certain level of consistency and quality control over all its contents.82 Instead, these concerns are limited to the scale of the project network.

The social in social coding represents that we do not only look and imitate others, but we can actually learn from that. This is why we are able to accumulate knowledge and increase the pace of learning (Pagel 2011). Smaller social groups usually equals less innovation, less imitation, and less social learning. Git causes collaboration to be fragmented into communities, but these are not mutually exclusive but nested (section 4.3.). Through watching, following, public activity streams, notifications, commit contributions, or discussions you can be part of many different groups at the same time. This means that social learning can easily and effectively spread across these disconnected networks that are connected through the activities of developers. The rate of social learning and accumulation of knowledge highly increases through adaptation and imitation. This raises questions regarding the relationship between the number of languages on GitHub and what this means for the pace of innovation. In natural languages, for example, language is considered as a barrier surrounding and protecting a culture and its identity and knowledge (Pagel 2011). These rings of language tend to slow the pace of adoption of ideas and therefore also the pace at which they flow through a global society. As a hub of collaborative activity, GitHub removes many of these barriers, but to what extent does this theory hold when different programming languages are deployed? At the very least, the interface is standardised; across the globe, everyone is subject to the same grammars of action. Formal artificial languages (e.g. programming languages) also remove some of these barriers of natural language, which would otherwise be a big issue for “global software development”. Clearly, there is much more territory worth exploring.

7. Conclusion

This research contributes to and elevates the contemporary academic debate on technicity as part of the broader project of software and platform studies. As such it informs the design and implementation of platforms that try to harness the many ways in which systems and information transparency can support collaborative innovation, knowledge sharing, and community building. By examining the constitutive relations between technical infrastructures and specified human actions, I have tried to show that software has the ability to produce and instantiate modes of collaboration that are specific to the environment in which that software is implemented. Systems of storage and versioning, access protocols, grammars of collaboration, social regulation, “social learning” (Bandura 1977), activity streams and visualisations, and so on, shape the conditions for these context-specific kinds of innovation, knowledge sharing, and community building to emerge.

As a distributed system for revision control and source code management, Git prefers modes of collaboration that are episodic or continuous. This resulted in a different approach to projects as nested code assemblages to which communities are attached, and with issues acting as hooks. Among the implications is the replacement of software release cycles by “perpetual beta” (O’Reilly 2005), and cumbersome organisations by task-oriented development neighbourhoods that are formed because code is a social object that structures collaborative efforts. Free distribution and access to source code and implementation are essential to collaborative effort on GitHub, which lead me to rephrase the question of ownership as one of accessibility and permission protocols and lead to a discussion of how the public and private had been reconfigured on the Web towards a “neodemocracy” (Dean 2003). Implications of this shift concern the central role of issues as units of collaboration, and how these issues are tracked and integrated into the platform as searchable units or through task allocation and issue management. Because issues and tasks are concrete units of collaboration, they can be measured (e.g. lines of code, difficulty, date stamps), compared (compare view, blaming, merging), allocated (e.g. to trained professionals, amateurs), and processed by the system (e.g. activity metrics). This leads to particular forms of knowledge sharing and learning such as disconnected issue solving, tiny snippets of code, or watching watching. Information transparency and activity awareness can greatly enhance the coordination of distributed efforts. As a result of a predefined grammar of collaboration, every action can have “an equal and opposite reaction”83 from the system that can be distributed (e.g. visualisation, notification, stream), which instantiates social regulability enabled by recursive awareness, and resulting in a complex system of social values such as status, skill, and reputation. In the discussions of social forking, Gist, and (re-)merging, it became clear that knowledge is shared through activity feeds and other automated systems that capture this activity. Information transparency and activity awareness make communities and their efforts visible and create opportunities for social learning and source code scrutiny.

I propose to continue these efforts to develop a “thick” understanding of software and platforms, and the critical role that systems and code play in shaping the technicity of our everyday lives and problems. There is relatively little research on the role of software in this regard. At least not on how software is constitutive as both product and process; how code emerges. This is also why we need more situated studies to show how technicity has implications on the micro-level, and in order to understand to what extent a system interpellates people to its logic. Software is not just supporting human faculties; it is rather a complex constellation of negotiations between humans and technology. The implemented features convert collaboration into a coded (programmed) concept, which leads to workflows that are specific to the GitHub environment (e.g. light-forks, social forking, episodic and continuous collaboration, issue solving in disconnected instances, the use of feature branches, (re-)merges, the fork and pull workflow). Collaboration becomes in an informational environment of global/local mirroring of captured information, which also imposes particular grammars of collaboration. Specific technological constellations are constitutive of particular kinds of networks such as knowledge-based social learning networks, and task-oriented development communities, where projects exist as nested code assemblages, to which communities are attached through issues. On the other end, we also need studies that focus on larger power relationships that shape the development of platforms or software as part of larger “ecosystems” (van Dijck 2013; Langlois 2011; Gillespie 2010). Related to this, and as van Dijck also stressed, we should also be deconstructing what meanings developers impute into the platform, its goals and its features (2013, 11). Technologies will keep changing, but an understanding of coded incentives could indicate broader discourse networks and provide insight into which direction the platform might evolve in the future.

8. Acknowledgements

Many thanks to Carolin Gerlitz and Esther Weltevrede for their research seminars, helpful comments throughout the writing process, and also for ensuring that this work could be submitted in English. Thanks also to those peer students who commented on earlier drafts. Last but not least, I am grateful to Jessica de Jong and Anlieka Marconi for their comments and taking the time to look over the structure, spelling, and grammar of the English writing.

9. Endnotes

- Chris Wanstrath, P. J. Hyett, and Tom Preston-Werner. GitHub. GitHub, Inc., 10 Apr. 2008. Web. 10 Feb. 2013. <https://github.com/>.

- Rob Sanheim. “Three Million Users.” The GitHub Blog: Watercooler. GitHub Inc., 16 Jan. 2013. Web. 22 Mar. 2013. <https://github.com/blog/1382-three-million-users>.

- See also Bucher 2012; Dodge and Kitchin 2011; Niederer and van Dijck 2010; Mackenzie 2002.

- It is worth mentioning that Caroline Bassett argues that there is a division between UK-based or European critical approaches (such as Fuller 2008, 2003; Rieder 2013; Berry 2012) and US-based de-politicised formalism (such as Manovich 2011) in relation to canonisation (2012, 122).

- See also Gillespie 2013, 2010.

- See also Gerlitz and Helmond 2013; Rieder and Röhle 2013; Weltevrede and Helmond 2012; Niederer and van Dijck 2010.

- Paraphrasing Adrian Mackenzie (2002), Martin Dodge and Rob Kitchin describe technicity with an emphasis on the social context (which is always more than just a backdrop): “Technicity refers to the extent to which technologies mediate, supplement, and augment collective life; the unfolding or evolutive power of technologies to make things happen in conjunction with people” (42).

- Cf. Dodge and Kitchin 2011, 59–61.

- See also Cronin 2003, 55.

- Chris Wanstrath. “We Launched.” The GitHub Blog: New Features. GitHub Inc., 10 Apr. 2008. Web. 1 Apr. 2013. <https://github.com/blog/40-we-launched>.

- See also de Alwis and Silito 2009.

- See also Benkler 2005; Wood 2010; Biddle et al. 2003; Ripeanu 2001.

- See also Harper and Kivilinna 2012; Loeliger 2009; Yip, Chen, and Morris 2006.

- Following in the footsteps of an earlier approach to group support and understanding system requirements called “office automation” had run out of steam (Grudin 1994, 19).

- See also Goguen 1997; Schmidt and Simone 1996; Rienhard et al. 1994; Schmidt and Bannon 1992.

- See also Dabbish et al. 2012; Heller, Marschner, Rosenfeld, and Heer 2011; Sarma, Redmiles and van der Hoek 2008; Redmiles et al. 2007; Sarma and van der Hoek 2007, 2003; Gross, Stary, and Totter 2005; Viégas, Wattenberg, and Dave 2004; Carroll et al. 2003; Schmidt 2002.

- See also Galloway 2004.

- See also Harper and Kivilinna 2012; Stallman 1985.

- See also Moran 2009; Dirlik 1996.